THE REGEX KING

Jeff Sisson's blog

Notes on email inference using llamafile

I’ve been avoiding learning deeply about large language models. I’m not totally sure why. It’s at least in part for the same reason other people are cautious about them: they seem bad for the environment, they’re going to pollute the delicate ecosystem of freely authored HTML, scammy people are interested in them. I think I also have a more specific reason I’ve stayed away: they’re not quite free, and haven’t been optimized to be freely runnable on anyone’s computer. There’s something about “you have to make calls to someone else’s paid API” that has actively repelled any interest I might have had in digging deeper.

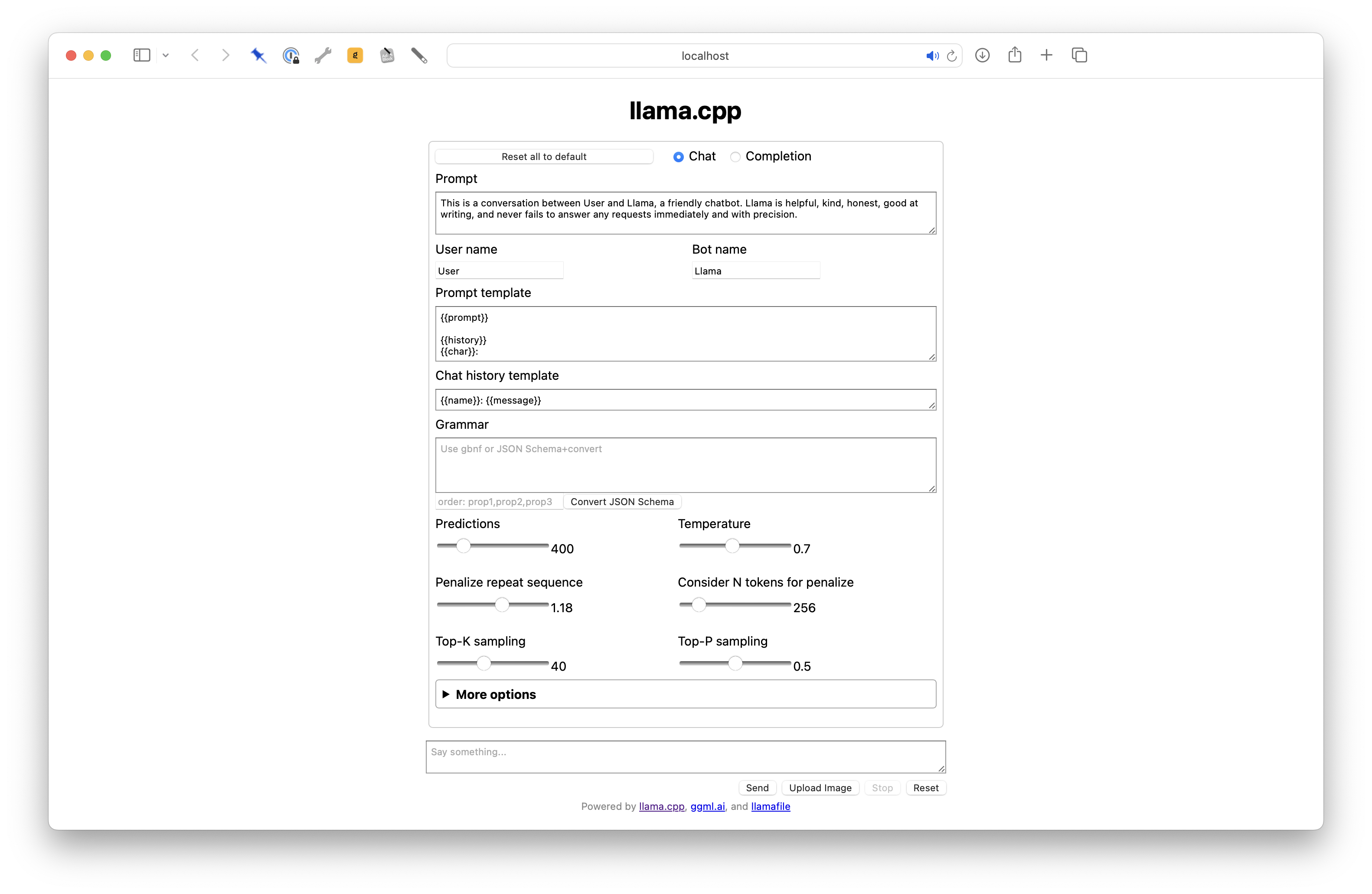

It was from this vantage point that I approached this blog post about llamafile — a project which aims to make running a large language model on most computers really easy — with interest. It repackages a few of the more freely licensed LLM’s in the “llama.cpp” family, using the cosmopolitan libc technique for making a single binary executable runnable on many different computer architectures. What you get is a small (~4GB) server that runs on your computer and presents a vanilla HTML interface where you can chat with the large language model:

Crucially, none of this requires the internet: interactions with the model run locally on your computer. For whatever reason it was this distinction that finally freed my mind to wander a bit…if I can try out an LLM without sending my data to someone else, or without paying someone else, or without slowly sucking some far-flung water aquifer dry, maybe it’d feel possible to do something interesting with it….

Is it possible to talk to my email??

I’ve had an email address since 2002, and have kept most of my emails since then. I don’t really spend time with my deep email archive. I’m mostly sending and receiving emails from the past month, at most. But I’ll occasionally try to remember an old link, or a place, or a story, and find email search to be wanting. Often the very simple reason email search doesn’t work is that I remember something worded one way, but it was worded a different way in the email, and this type of mismatch breaks the fuzzy search logic most email apps use.

I would never in a million years submit any of my emails to a corporate large language model, but running an LLM locally presented an opportunity for seeing how the promise of “private large language models” worked in practice, using my local email archive. Like what if I could talk to my email and remember some place or thing I emailed someone about years ago? Or find a timeless URL someone had once sent me? Or find some specific story someone told me once, I think? It’s tempting to picture a large database like “every email I’ve ever sent or received”, and imagine there are gems buried somewhere in there, if I could only find them.

I was specifically inspired by this blog post about “Retrieval Augmented Generation in Go” by Eli Bendersky, which describes “retrieval augmented generation”: a technique where you ask a large language model a question, but augment that question with extra text, namely snippets from some large text corpus that are semantically similar to the question you asked. I wanted to apply this technique to my local database of emails, so I could ask questions against my email archive.
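As a rough illustration of the “augment” step, here is a minimal Go sketch that stitches retrieved snippets and a question into a single prompt string. The helper name `buildPrompt` and the template wording are hypothetical, not taken from the post's code; a real prompt would likely need tuning for the specific model.

```go
package main

import (
	"fmt"
	"strings"
)

// buildPrompt (hypothetical) places the retrieved snippets ahead of the
// question so the model can draw on them when it answers. The template
// text is an assumption, not the prompt used in the post.
func buildPrompt(question string, snippets []string) string {
	var b strings.Builder
	b.WriteString("Use the following emails to answer the question.\n\n")
	for i, s := range snippets {
		// Number each snippet so the model can tell them apart.
		fmt.Fprintf(&b, "Email %d:\n%s\n\n", i+1, strings.TrimSpace(s))
	}
	fmt.Fprintf(&b, "Question: %s\nAnswer:", question)
	return b.String()
}

func main() {
	// In a real pipeline these snippets would come from the similarity
	// search; here they are placeholders.
	prompt := buildPrompt("who did i live with in france", []string{
		"placeholder snippet one",
		"placeholder snippet two",
	})
	fmt.Println(prompt)
}
```

Ending the prompt with `Answer:` nudges a completion-style model to continue with an answer rather than more questions.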

Representing text as vectors

One innovation in large language models is that text can be converted into a mathematical representation called a “vector”, which is a list of floating point numbers with a fixed size. So a given word “hello” looks like this as a vector:

[0.026315663, -0.05107676, 0.052759565, -0.03678608, -0.057748064, 0.033566643, -0.02589281, -0.002132243, -0.028607314, 0.012253743, -0.008096664, 0.001494693, 0.0365746, 0.03807026, 0.009833517, 0.0067754393, -0.010480829, 0.022064133, 0.020115668, -0.037109215, 0.049926486, -0.036568295, 0.0053705918, 0.031117717, -0.032250315, -0.052203, -0.025519572, -0.020293564, -0.033220563, 0.023608679, -0.006456362, -0.004586842, 0.010010897, -0.04201805, 0.015593706, -0.03028678, -0.043785904, -0.03974351, 0.0014129126, 0.047360025, 0.017966205, 0.012411393, -0.015565804, 0.046122417, 0.05755795, 0.018097928, -0.015544698, -0.014457393, 0.0019716504, -0.037025385, 0.034752447, -0.040650655, 0.043754783, -0.00097598345, -0.035391726, 0.0033253669, 0.035139333, 0.024327567, -0.0053036534, 0.00032466973, 0.021560345, -0.0046450747, 0.036632985, -0.04003288, 0.027276658, -0.034950882, 0.027737923, 0.03640247, 0.038598653, 0.006711874, -0.052254688, -0.06056385, 0.06397524, 0.05018992, 0.03146692, -0.03179005, 0.0065816822, 0.031681385, 0.048647005, 0.03895677, -0.05227646, -0.018797494, -0.024809726, -0.034158837, -0.0024025394, -0.008448369, 0.023889156, -0.014096949, 0.053465273, 0.031300355, 0.002865441, -0.005450165, 0.050935287, 0.016651286, -0.01608125, -0.04010522, -0.028432064, 0.03995945, 0.011018825, -0.028760085, -0.013287061, -0.036134444, -0.007604672, 0.02963232, 0.00946132, -0.039779358, -0.0065998007, -0.006972531, -0.06255624, -0.028554522, -0.028519401, -0.046248812, -0.042899422, -0.012204772, -0.046020266, 0.04600531, 0.021571305, -0.036364153, 0.033461068, 0.041704237, 0.05259111, 0.043571096, -0.04007029, -0.034076557, -0.03011038, 0.008948071, -0.04813023, -0.044153288, 0.03518758, 0.056217145, 0.012336162, -0.032382835, 0.019346481, 0.014965278, 0.046533752, 0.046599004, -0.02928571, -0.02224698, -0.010510442, 0.042641334, -0.021578278, -0.040050805, 0.045797728, 0.02277755, 0.049083006, -0.026401268, -0.024383407, -0.025588537, -0.049048226, 
-0.0531303, -0.042156238, -0.012985709, -0.010362753, -0.018121995, 0.007163994, -0.043389708, 0.023375297, -0.03768581, -0.017458197, 0.050082564, 0.0060853222, 0.027943356, -0.024461797, 0.031332087, 0.037615683, -0.013563662, 0.02029403, -0.014864157, -0.029464258, 0.04442369, -0.029298533, 0.0302472, 0.04715714, 0.022353636, 0.043481253, -0.033672825, 0.0474069, -0.05228587, -0.002790663, 0.024341144, 0.025120774, 0.036285434, -0.00346869, -0.055576056, -0.07371648, 0.03767376, 0.041797392, -0.027872743, -0.030338455, -0.071010545, 0.0006263308, -0.003296338, -0.05668749, 0.041626733, -0.02344105, -0.014074221, -0.048079737, -0.016580561, -0.006270523, 0.031279285, 0.033357352, 0.0117028225, -0.006009747, -0.023284834, -0.012092737, 0.06094602, 0.013674777, 0.003260308, -0.014270174, 0.036602862, -0.004527294, 0.021936249, 0.02703726, -0.006649984, -0.046160154, 0.0054655443, 0.027177623, -0.011909271, -0.0005080942, 0.056488566, -0.037823215, 0.0010502205, 0.028413123, -0.030004766, 0.0102585675, -0.031900134, -0.011743591, 0.0114091, -0.026823547, -0.0132994205, 0.007096897, 0.0055736704, -0.020466903, 0.0010579303, -0.010763015, -0.025727881, 0.03693008, -0.010247399, 0.016443394, 0.032162197, -0.00322929, 0.025612716, -0.0010617772, -0.0045681344, -0.005656379, 0.0038616783, 0.02907526, 0.015015733, 0.046991542, 0.048260894, 0.0037447503, 0.028981335, -0.008149285, -0.013788863, -0.023555005, 0.010223529, 0.02192332, -0.0451934, -0.062838726, 0.026128672, 0.02289665, -0.030275302, -0.063174084, 0.0022732366, -0.022915745, -0.032914564, 0.016041432, -0.012015501, 0.07272382, -0.024313914, 0.028003944, 0.03830679, 0.017905323, -0.04439989, -0.028542832, -0.04374546, -0.029714901, -0.013198032, -0.0040778373, -0.015327487, 0.021371499, -0.0025264495, 0.041654684, 0.03024055, -0.014477172, -0.005203952, -0.017598575, 0.025533067, 0.027074886, 0.035987914, -0.029328384, -0.019238349, 0.060330536, -0.01350854, -0.022097755, -0.01081782, -0.01862954, 0.024826696, 
0.05154685, 0.038304742, 0.050340444, 0.017058605, -0.07946641, -0.04604151, -0.026408235, -0.03904443, 0.030384433, -0.07985361, 0.061564326, 0.012700621, -0.012354287, -0.009344623, -0.0367299, -0.07239036, -0.033526517, 0.013479105, -0.014741456, 0.015465579, 0.006340796, -0.041340258, 0.044028617, -0.032779563, -0.04694552, -0.039798666, -0.008055787, 0.0022759913, -0.043846805, -0.005985449, -0.009902096, -0.0156177925, -0.01312619, 0.006933162, 0.056553904, 0.04710293, 0.009497505, -0.020777516, -0.0327266, -0.025073212, 0.012446564, 0.039447058, 0.06872826, 0.03621971, -0.023626817, -0.03655862, 0.013034176, 0.03753551, 0.05189472, -0.0030686557, 0.01195667, 0.045128383, 0.028401954, 0.009839714, 0.010051032, -0.03908404, -0.04388602, -0.013252326, 0.053872455, -0.021344408, 0.02033162, 0.042927306, 0.040674552, -0.010778672, 0.010513371, -0.0024791993, -0.007599492, -0.03129863, 0.033941735, -0.03160518, 0.012811407, 0.03917931, 0.00887006, 0.036761038, -0.0016270209, -0.02900771, -0.020914309, -0.022955302, 0.013110533, 0.037405018, 0.042493112, 0.0029953097, -0.0005984587, 0.025215842, 0.0019286971, 0.0008111912, -0.06537792, -0.02044328, -0.005869833, -0.006807886, -0.0034591414, -0.05074447, -0.017459536, -0.03532829, 0.027767923, -0.026316686, 0.0024302586, -0.037411038, 0.0615568, -0.028561596, -0.005362948, 0.01471921, 0.020184528, 0.02653486, 0.041428342, -0.007413157, -0.04561999, -0.017273037, 0.047322955, 0.051810987, 0.030876957, -0.012946942, 0.0010372113, 0.033227976, 0.0064514694, 0.033085752, -0.013396054, 0.048426185, 0.0075015305, 0.022221081, -0.033596326, 0.0069293217, -0.023342313, -0.012286653, 0.0102367345, -0.0062289997, 0.0281104, -0.022718213, -0.016924072, -0.019212652, -0.001185613, -0.029464584, 0.044396423, -0.0324116, -0.014398765, -0.025774622, 0.055743262, -0.027121518, 0.020674873, -0.00017766615, 0.03619264, 0.019520363, 0.022839574, 0.047789592, 0.005764716, -0.03447098, 0.022432338, -0.043516744, -0.037231553, 
-0.025048206, -0.009967526, 0.037328403, 0.035044707, -0.004535913, 0.038086124, -0.034116786, -0.046980895, -0.03524534, -0.02570679, 0.035474673, -0.019355258, 0.013432988, -0.028117996, -0.041342087, 0.01409986, -0.03525537, -0.038160156, -0.052420918, 0.01810449, 0.035464697, -0.025294058, 0.010007306, -0.025996357, -0.06924902, 0.028132096, -0.00079841854, -0.013501817, 0.046770174, 0.07517163, 0.037037298, 0.025366541, 0.040248822, -0.028081292, -0.028332917, 0.036714826, 0.007687548, -0.028901538, 0.03839228, -0.027672466, -0.0041911914, 0.048854157, -0.01784227, -0.0155344615, 0.04750416, 0.04405297, 0.024017757, 0.024709102, -0.024437224, -0.03625656, 0.03626268, -0.0119398665, -0.023228755, 0.042166322, -0.017202552, 0.010498574, 0.030785644, -0.042424165, 0.015511501, -0.04409854, 0.021100117, -0.002790288, 0.004432084, -0.014360784, -0.037868485, -0.040606778, 0.0028607904, 0.039088912, 0.032936096, 0.03599776, -0.017276917, 0.020413958, -0.009697305, -0.0479381, -0.02891013, 0.03403221, -0.024198353, -0.03161053, -0.003828878, 0.014621108, 0.06415569, -0.01566947, -0.024424698, 0.010320143, 0.029164797, -0.037783336, 0.033035688, -0.023604764, 0.0006745482, -0.024393523, -0.023095502, -0.018396921, 0.019055322, -0.011880366, 0.023322131, 0.056035183, 0.00030634843, -0.020955907, -0.049658146, -0.03962187, 0.022502886, 0.036499042, -0.029692655, 0.032915078, -0.028775077, -0.011393002, -0.005315213, -0.049632583, 0.070666976, -0.07139168, 0.009008762, 0.019913368, -0.025216734, 0.016907237, 0.033562236, 0.03401224, -0.008816014, -0.037642844, 0.068338215, -0.015326151, 0.024804862, -0.03981009, 0.021049043, -0.016449336, -0.019830056, 0.043424606, -0.010613228, -0.03317898, 0.022078512, 0.008132583, 0.036657564, 0.021471148, -0.04202048, 0.010479801, -0.060896814, 0.0036573336, -0.012137062, -0.009369492, -0.024691008, -0.028375078, -0.03712006, 0.024363784, 0.0619363, 0.0012520632, 0.020621145, -0.030255327, -0.030828038, 0.047324497, 0.033152834, 
0.037796646, -0.01434374, -0.066324085, 0.022530057, 0.04724558, -0.018717038, 0.02079031, -0.042318594, 0.012404005, 0.003054884, 0.040080458, -0.007734346, 0.00966154, 0.01965865, -0.02969571, 0.048648365, 0.030942103, 0.03517304, -0.044960428, 0.023147801, -0.013064005, 0.012933487, 0.031137485, 0.043248158, -0.039774954, 0.053235162, 0.033253767, 0.04959841, -0.026097752, -0.013117914, 0.02765747, -0.04861631, 0.042001173, 0.035988443, 0.019028643, -0.0063236253, -0.03546606, 0.05249698, 0.023819618, -0.029397534, 0.0014730253, -0.000116883064, 0.04589052, 0.07982128, 0.042475965, 0.02714497, -0.011290014, 0.048732307, -0.007990668, 0.036892712, -0.05074458, -0.03419913, 0.046826247, -0.0351593, -0.017725315, 0.02825849, -0.02061025, 0.010495187, 0.029973673, 0.013354483, 0.04428554, 0.0059044575, 0.040259574, 0.024635406, 0.056278225, 0.029261485, 0.021040283, -0.02957053, 0.015028589, 0.09915923, -0.006757007, 0.021263221, -0.022744874, 0.03037738, 0.015824845, -0.039941747, 0.024193197, -0.025102578, 0.031861637, 0.04820494, 0.056952294, 0.015798865, 0.012578128, -0.034587458, 0.051569622, 0.036841784, -0.029768696, -0.037315454, -0.004181349, 0.03994207, -0.012483087, -0.019211547, -0.019353691, 0.018520227, 0.00461553, -0.008341581, -0.05549858, 0.05766917, 0.05097321, 0.00880379, 0.013997554, -0.06590693, -0.01869569, -0.042314664, -0.018904256, -0.0055119256, 0.03792496, 0.036814462, 0.013308163, 0.036309067, 0.020966355, -0.0044715456, -0.051457252, -0.0029825429, -0.014860995, 0.0038679296, -0.037870258, 0.032946188, 0.022204902, 0.031311534, -0.0159217, -0.027177777, 0.019132279, -0.0015548733, 0.0062460816, 0.024122085, 0.0013738354, -0.015215801, -0.031390846, -0.008035339, 0.020526154, 0.006488116, -0.0024450996, -0.017090369, -0.039943922, -0.01950265, 0.032263108, 0.035478763, -0.033199288, 0.026933322, -0.027106462, -0.02065646, -0.007509963, -0.050557084, -0.03340465, -0.0047946647, 0.015502574, -0.025161006, -0.0077433935, -0.025955958, 
0.0020085182, -0.021800976, -0.009508331, 0.033535887, -0.047463566, -0.058905426, 0.028794395, -0.0077173035, -0.042501763, -0.024379179, 0.017200196, -0.0070375046, 0.019198136, -0.012132133, 0.03652421, -0.039759845, 0.04861978, 0.0030262715, 0.042866085, 0.041402888, 0.017450964, 0.009089696, 0.0028635971, -0.043624565, -0.028436044, 0.014845563, 0.007810105, 0.040422868, -0.01659905, 0.014551624, 0.03692245, 0.008013322, 0.027947398, -0.005875631, -0.0029010554, 0.0076159886, -0.04006688, -0.006206228, 0.0038399713, 0.0630469, 0.035773862, 0.031985953, 0.022648549, -0.020068891, 0.016998352, 0.006821056, -0.02639971, -0.023113638, -0.016550884, 0.04542948, -0.04944595, 4.6349105e-5, -0.030284645, -0.008464625, 0.04505634, -0.0008425875, 0.0018507987, -0.045248747, -0.001249333, -0.027375245, -0.034440503, -0.03445196, -0.016945217, 0.032217544, 0.01201553, -0.011383161, 0.016768109, 0.02209182, 0.04161331, -0.026711816, -0.027969444, 0.013154886, 0.040792376, 0.00037842162, 0.031208977, 0.055764157, -0.041692186, 0.01183059, 0.009995629, 0.011140254, 0.06494206, 0.0007583337, -0.018633584, -0.03988589, -0.06401332, -0.026469348, -0.03703018, -0.009482455, 0.00750478, -0.01196945, 0.0010084544, -0.015276794, -0.028999355, 0.039044295, -0.0015245616, 0.019363733, -0.013175389, 0.020596242, 0.015313282, 0.04776969, 0.03503184, -0.024441065, -0.021466441, 0.03491211, -0.03033822, -0.04221141, 0.043747444, 0.031174233, -0.05234127, -0.00021145339, -0.0108963, -0.02563045, -0.030280393, -0.063621596, -0.0059554386, 0.009598384, 3.800433e-5, -0.011455618, 0.0024069417, 0.034393646, 0.029128842, 0.007318114, 0.051935125, -0.041065566, -0.023579529, -0.015356412, 0.020628927, 0.0016839687, -0.006113899, -0.025948673, 0.011051999, -1.7599392e-5, 0.021779431, 0.021231307, 0.04925588, 0.02865201, -0.03592068, 0.035591897, -0.026523454, 0.009644514, 0.04879437, -0.029754482, -0.030387688, -0.030870467, -0.03533088, -0.02333679, 0.022666639, -0.019431714, -0.036629736, 
0.035112843, 0.017431475, -0.017157005, -0.026203807, 0.022084715, -0.012101193, -0.016560372, 0.02747846, -0.036947746, -0.019196276, 0.029935298, -0.05197717, 0.029685955, -0.00030348718, 0.032604396, 0.020966766, -0.044866037, 0.053359862, -0.042657174, -0.0041652545, -0.045802977, 0.013752225, -0.017868387, -0.025728293, 0.034969736, 0.019753583, 0.028519642, -0.025506618, -0.027275596, 0.002548761, -0.021548366, -0.030770132, 0.037810154, 0.039124895, -0.036099177, 0.0067838277, 0.0014933676, 0.03411964, 0.030397482, 0.02907957, -0.013021644, 0.03546133, -0.058428895, -0.028665997, -0.033455126, -0.037742794, -0.0025381332, -0.029671138, 0.027966527, -0.04934853, -0.03034516, 0.02078554, 0.021314679, -0.019340657, 0.008697383, -0.040426604, 0.017037353, -0.009563749, -0.0060880305, 0.026690366, 0.04071305, -0.016738972, 0.0020899752, -0.04395833, 0.0059037167, -0.020659246, -0.055160575, 0.036971394, 0.012827337, 0.023630928, -0.027455963, 0.010689233, -0.020523228, -0.010644282, -0.022099117, -0.05575785, -0.0014715773, 0.045237053, 0.024157247, -0.026763534, 0.004174187, 0.00038428922, -0.036329865, -0.004427296, 0.025025152, 0.04822559, 0.046744928, -0.021798782, -0.031161044, 0.01157757, 0.027121102, 0.013186705, 0.032716304, -0.0059137377, 0.050382566, -0.04728639, -0.030213784, -0.014744704, -0.03136835, -0.008328803, -0.00839062, -0.0036500788, -0.056926843, -0.02807327, -0.01330011, 0.041436, 0.02201358, 0.022166254, -0.03179345, 0.005270372, 0.018509101, 0.014327067, 0.018272892, -0.021296602, -0.03977375, -0.013095145, -0.014545233, -0.009666092, -0.022802576, 0.0005194365, 0.018938834, 0.041110124, 0.046513252, 0.025121529, -0.036493827, 0.04333533, -0.052713536, 0.016992891, 0.017229997]

…where each of these 1024 numbers is one coordinate in a multi-dimensional space. Because the vector “length” is 1024, you can picture “hello” being plotted in a space that has 1024 dimensions rather than two or three. Finding “similar” texts then involves doing math to find other texts whose vector coordinates are spatially “nearby”.

How these texts are specifically converted to each of the 1024 coordinates involves math and training of software models, and for the purposes of this project it is a black box to me. Simon Willison has a good blog post about embeddings that gets into more of the details.

Picking a vector database

A frothy part of the “business” of large language models right now is companies building “vector databases”, which provide various ways of storing vectors created from a corpus of text, so that you can later run search queries that retrieve similar texts using those vectors. Vector databases are useful for retrieval augmented generation, too: take an input “question”, retrieve some number of similar texts from a vector database, and feed the question plus those similar texts to the LLM as a prompt.

I was glad to find that there’s a pgvector extension that adds vector storage and search capabilities to the postgres SQL database. pgvector exposes a new vector(int) column type for storing embeddings, and allows you to retrieve similar text by comparing the cosine distance between vectors stored in the database and an input vector:

```sql
-- this enables the pgvector extension
CREATE EXTENSION IF NOT EXISTS vector;

-- this creates a table which will store a snippet of "content"
-- as a text column, alongside the fixed-length vector
-- representation (in this case, with a length of 1024)
-- called an "embedding"
CREATE TABLE IF NOT EXISTS chunks (
  id bigserial PRIMARY KEY,
  content text,
  embedding vector(1024)
);

-- searching for content using pgvector uses "cosine
-- distance" math to compare the distance between two vectors,
-- which in this query are provided by the vector stored in
-- each row and the input vector provided at query
-- execution time; <=> is pgvector's cosine distance operator,
-- so 1 - distance turns it into a similarity score
SELECT
  content,
  1 - (embedding <=> $1) AS score
FROM chunks
ORDER BY embedding <=> $1
LIMIT 5;
```
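The `$1` parameter has to arrive as a vector. One way, sketched below in Go under the assumption that the database driver sends the parameter as text, is to format the embedding in pgvector's text input format, a bracketed comma-separated list like `[0.1,-0.2,0.3]`. The helper name `toPgvectorLiteral` is hypothetical; client libraries such as pgvector-go provide their own vector types for this.

```go
package main

import (
	"fmt"
	"strconv"
	"strings"
)

// toPgvectorLiteral formats an embedding in pgvector's text input
// format, e.g. "[0.1,-0.2,0.3]", so it can be bound to $1 as text.
func toPgvectorLiteral(v []float32) string {
	parts := make([]string, len(v))
	for i, x := range v {
		// 'g' with precision -1 produces the shortest string that
		// round-trips to the same float32 (it may use exponent form).
		parts[i] = strconv.FormatFloat(float64(x), 'g', -1, 32)
	}
	return "[" + strings.Join(parts, ",") + "]"
}

func main() {
	// The first three numbers of the "hello" vector above.
	fmt.Println(toPgvectorLiteral([]float32{0.026315663, -0.05107676, 0.052759565}))
}
```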

Populating a vector database using llamafile

With postgres and pgvector in hand, I needed to create and populate a postgres table like this with vectors for all of the text in my emails. This required going on a bit of a journey…

I use the native Mail.app macOS application for my email, and ended up needing to write some code to leverage the undocumented sqlite database and file storage layout Mail.app uses to store email texts, so that I could retrieve the string contents of emails matching criteria like “the last 10,000 emails in my inbox and sent messages”.

I’d initially hoped to create vectors for email texts by making requests to the /embeddings API exposed by the llamafile server (embeddings are also used by the LLM itself). Unfortunately, I found that the vectors produced by this endpoint don’t work for cosine similarity searches — the vectors appear to be tuned for a question-answering use-case, where prediction of “next token” (e.g. what happens at the end of a sentence) is more important than semantic similarity of the overall sentence.

Populating a vector database using ~~llamafile~~ Go

The next best thing was running a different model to produce embeddings locally. The go-to library for generating embeddings is the sentence-transformers Python library. I’d initially hoped to use the Go library github.com/nlpodyssey/cybertron instead of Python, to try to better understand some of the abstractions that have developed around libraries like sentence-transformers without also having to wrap my head around Python at the same time.

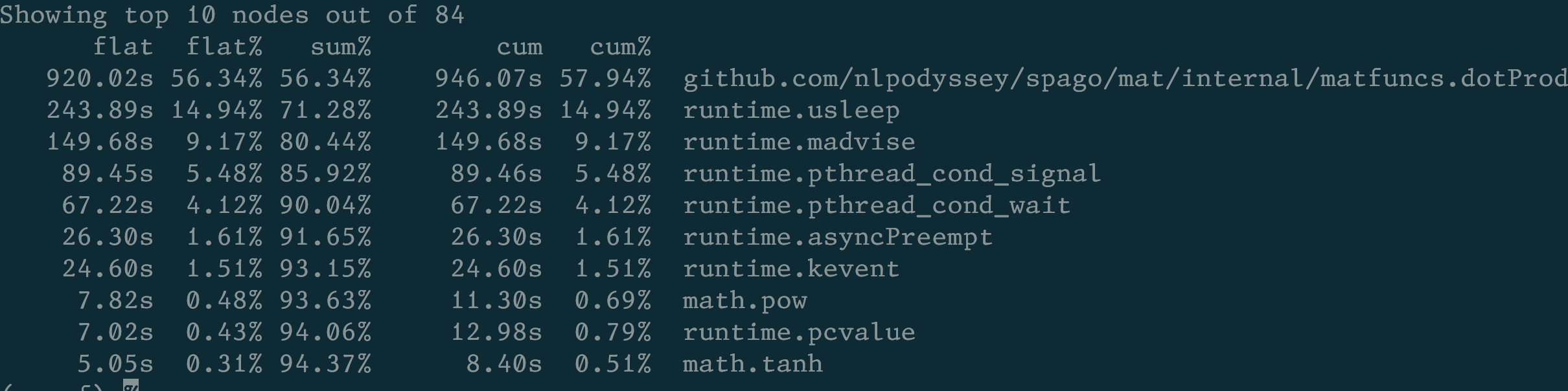

Here’s an implementation of a CLI utlity from cybertron in Go thats converts input texts on stdin into output vectors on stdout, using the all-MiniLM-L6-v2 model that maps text to a 384 vector space. This worked well for vectorizing chunks of texts and inserting them into my database, but was quite slow! Here’s the results of running Go’s profiling pprof tool for a given run of vectorizing 100 or so sentences:

The slowest function call, clocking in at ~15 minutes in aggregate, is a low-level math function that does “dot product” math somewhere in the bowels of the vector translation process. This was when I came to appreciate the specific role GPUs play in AI: the cybertron Go library runs on the CPU rather than the GPU, and is really slow as a result!

Populating a vector database using ~~llamafile~~ ~~Go~~ Rust

Code written directly in higher-level languages like Go or Python runs on the CPU, which can do the math used in AI tasks like calculating text embeddings, but much more slowly. The way to do this math really fast is to run it on a GPU, which requires software that can talk to lower-level GPU APIs like CUDA (for Nvidia GPUs) or Metal (for Apple Silicon GPUs). One popular library that does this GPU wrangling for common AI tasks is PyTorch. It also has a C++ library called libpytorch that’s linkable by other language runtimes which want to embed it.

I was still interested in avoiding having to implement my database population code in Python…I don’t really have a good excuse for why. I wasn’t able to find any well-maintained Go wrappers for the libpytorch library, so I found a Rust library for libpytorch that can be paired with another library called rust-bert to calculate text embeddings on the GPU. With an implementation for how to populate my vector database now written in Rust and using libpytorch under the hood, I was seeing vector embedding calculation taking something like 10 seconds on the GPU, where previously it had taken around 15 minutes on the CPU.

Putting it all together

The code now consists of:

- A Go binary that initializes an empty vector database in postgres, queries the Mail.app database for emails, and breaks emails into smaller sentence-like chunks of text, storing those chunks in postgres without associated embeddings vectors.

- A Rust binary that queries the postgres database for chunks of text that haven’t yet been vectorized, sends those text chunks off to the GPU for vectorization (using libpytorch), and stores the resulting vectors back in the database.

- A running instance of llamafile, providing programmatic access to an LLM.

- Another Go binary that still uses the CPU-bound cybertron package to generate a vector embedding for a question, queries the postgres database for texts similar to the question using cosine similarity, and feeds the question + resulting texts into llamafile’s /completion endpoint, returning the LLM’s predicted result.

You can see all of the code here.

Does it work?

The setup is sort of complicated, but it runs OK! Does it actually work as a way to talk to my email? Here are some real questions/answers I tried, with some editorial context:

Question: When was harolds birthday?

Answer: Harold’s birthday is on ABCDEF 1AB, as mentioned in the email.

So far, so good! The emails returned here were from a recent birthday party, and the specific month/day was correct.

Question: when was harold born

Answer: Harold was born on ABCDEF 1AB, 1947.

In this instance, the LLM can’t quite hack it, and the date is completely wrong (Harold is a toddler and wasn’t born in 1947). The day and month themselves seem to be derived from dates of real emails I sent or received, but the year looks like it was hallucinated.

Question: where did i live in france

Answer: Based on the emails provided, it appears that Jeff Sisson lived and worked in Rennes, France for a period of time. He was teaching English to French students at a high school while also working on his own projects such as writing and creating content for his website “ilikenicethings.com”.

This summary is pretty accurate, if making my life at this time seem a little more boring than I remember it!

Question: who did i live with in france

Answer: You lived with Alisa and Jivko in France.

Correct.

Question: what was the name of the show double happiness did

Answer: Based on the context provided, it seems that Double Happiness is an online platform or a website that has been inactive since 2015. The founders were Jeff Sisson and Borna Sammak, with Ricky Laska joining them shortly afterward. There are emails exchanged between these individuals discussing the site’s status and potential revival. However, I cannot provide specific details about any TV show or movie titled “Double Happiness” without further information.

This is mostly accurate, though I think it’s based on the computer’s reading of a single email I forwarded to someone at some point, which the LLM didn’t quite read in full (it omitted Bennett, for example!). It’s also kind of funny that the LLM assumed Double Happiness was likely to be some flavor of intellectual property.

Question: what hikes did we do in death valley

Answer: We went on a hike at Badwater Basin and another one near the salt pans.

I’ve certainly been to these places with Kathryn, but don’t know that I’d have described them as hikes — this appears to be the model hallucinating.

Question: what hikes have i gone on

Answer: Based on the emails provided, it seems that Jeff Sisson is interested in finding a good winter hike for him and Tom Bubul. They are considering options such as Old Croton Aqueduct trail or Bull Hill. Additionally, they discussed other potential hikes like Arden Point and Glenclyffe, Ken Lockwood Gorge and the Columbia Trail, and Long Walk at Palisades Park. However, it is unclear if any of these specific hikes have been actually gone on by either person.

This is true and based on a real email! But it was only one of many matched hiking emails I’ve sent or received, and the only one the LLM cared to reference, for some reason.

Thoughts on how this works

Some general observations, having played around with this:

- The results are more accurate when the LLM specifically mentions “based on the emails provided”. I’m not yet sure if there’s a better way to tell the LLM to only do this.

- I’m finding the emails returned via similarity search to be as much or more interesting than how the LLM interprets them.

It seems like the similarity search and LLM both struggle a little with the format of an email, where any given individual message rarely tells the full story about what’s being discussed. Unlike, say, a website, an email presumes a lot of prior context not written into the email itself, and that’s kind of what’s beautiful about emails. Maybe these tools will get better at inferring that type of missing context, but it seems as likely that they won’t, and there will remain some forms of human communication that will be resistant to machine interpretation.